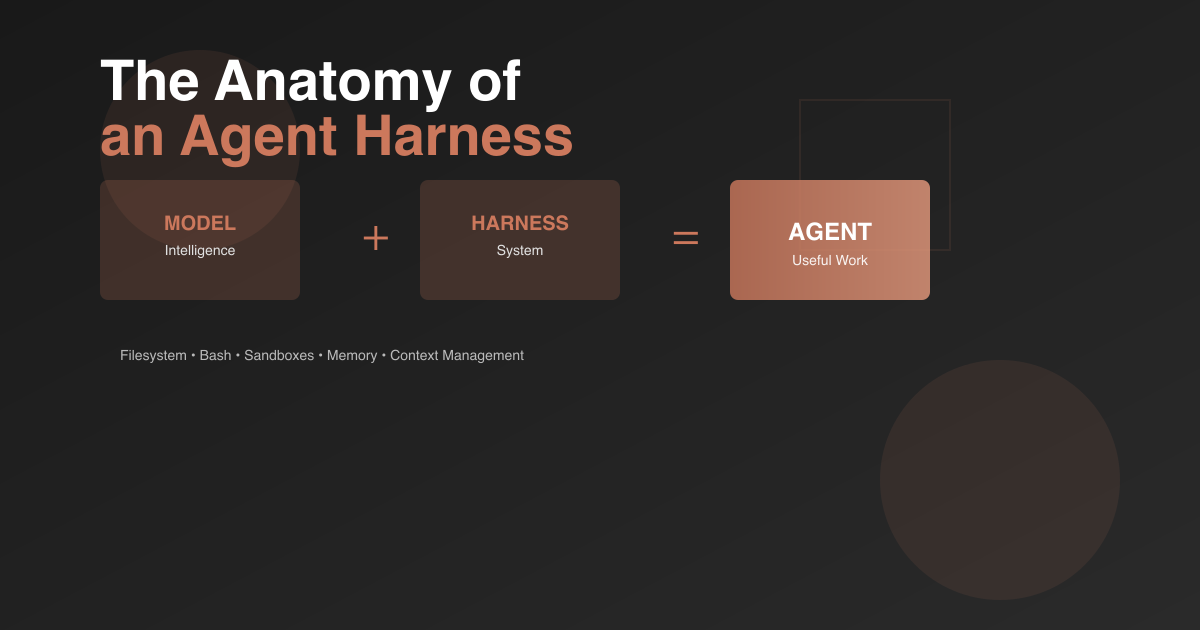

Agent = Model + Harness. While models get most of the attention, harness engineering is what transforms raw intelligence into practical work engines. LangChain’s deep dive explains why harnesses matter and what core components define modern agent systems.

The Harness Definition

A harness is everything that isn’t the model itself - the code, configuration, and execution logic that makes model intelligence useful. Think of it as the scaffolding around a raw LLM that gives it:

- State management across interactions

- Tool execution capabilities

- Feedback loops for self-correction

- Enforceable constraints for safety

Without a harness, you just have an API that generates text. With one, you have an agent that can accomplish real work.
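To make the four responsibilities concrete, here is a minimal sketch of a harness loop. It is an illustration only: the `CALL <tool> <argument>` wire format, the `run_agent` function, and the scripted stand-in model are all hypothetical and do not come from LangChain or any vendor API.

```python
from typing import Callable

Message = dict[str, str]


def run_agent(
    model: Callable[[list[Message]], str],
    tools: dict[str, Callable[[str], str]],
    task: str,
    max_steps: int = 5,
) -> str:
    """Drive a model until it answers in plain text or the step budget runs out."""
    messages: list[Message] = [{"role": "user", "content": task}]  # state
    for _ in range(max_steps):  # enforceable constraint: bounded steps
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        if not reply.startswith("CALL "):
            return reply  # plain text means the model is done
        # Hypothetical wire format: "CALL <tool_name> <argument>"
        _, name, arg = (reply.split(" ", 2) + [""])[:3]
        tool = tools.get(name)
        try:
            result = tool(arg) if tool else f"error: unknown tool {name!r}"
        except Exception as exc:  # surface failures to the model instead of crashing
            result = f"error: {exc}"
        messages.append({"role": "tool", "content": result})  # feedback loop
    return "stopped: step budget exhausted"


def scripted_model(messages: list[Message]) -> str:
    """Stand-in for a real LLM: asks for one tool call, then answers."""
    if messages[-1]["role"] == "tool":
        return f"The answer is {messages[-1]['content']}"
    return "CALL add 2,3"


def add(arg: str) -> str:
    return str(sum(int(x) for x in arg.split(",")))


print(run_agent(scripted_model, {"add": add}, "What is 2 + 3?"))
```

Swapping `scripted_model` for a real model call leaves the loop unchanged, which is the point: everything in `run_agent` is harness.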

Core Harness Components

Filesystem: The Foundation

The filesystem might be the most underrated harness primitive. It unlocks:

- Durable storage - Work persists across sessions

- Context offloading - Information that doesn’t fit in the context window lives on disk and is read back only when needed

- Collaboration surface - Multiple agents and humans coordinate through shared files

Combined with git, agents get versioning to track progress, roll back errors, and branch experiments. This becomes crucial for long-horizon tasks where work spans multiple context windows.
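Durable storage can be as simple as a checkpoint file that each session reads on startup and rewrites before exiting. The sketch below assumes a JSON file and a made-up state shape (`completed`, `notes`); neither is prescribed by the article.

```python
import json
import os
import tempfile
from pathlib import Path


def load_state(path: Path) -> dict:
    """Return the saved state, or an empty starting state on the first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {"completed": [], "notes": ""}


def save_state(path: Path, state: dict) -> None:
    """Write to a temp file, then rename, so a crash cannot leave a half-written checkpoint."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(state, tmp, indent=2)
    os.replace(tmp_name, path)  # atomic when source and target share a filesystem


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as workdir:
        checkpoint = Path(workdir) / "progress.json"  # hypothetical file name
        state = load_state(checkpoint)
        state["completed"].append("step-1")
        save_state(checkpoint, state)
        print(load_state(checkpoint))
```

Committing the checkpoint file to git after each save would add the history and rollback described above.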

Bash + Code: The Universal Tool

Instead of pre-building every possible tool, give agents bash and code execution. This shifts the paradigm from “fixed toolset” to “autonomous tool creation.”

Models trained on billions of tokens of code can write their own tools on the fly. A single bash tool can offer more flexibility than hundreds of pre-configured API wrappers, because the agent composes whatever commands the task requires.
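A bash tool can be a very small piece of code. The sketch below is one possible shape, assuming `bash` is on the PATH; the function name `run_bash` and the returned dictionary layout are illustrative choices, not an established interface. It runs commands with no isolation at all, so it belongs inside a sandbox.

```python
import subprocess


def run_bash(command: str, timeout_s: float = 30.0) -> dict:
    """Run one shell command and return what the model needs to see."""
    try:
        proc = subprocess.run(
            ["bash", "-c", command],
            capture_output=True,  # collect stdout and stderr instead of printing
            text=True,            # decode bytes to str
            timeout=timeout_s,    # stop runaway commands
        )
    except subprocess.TimeoutExpired:
        return {"exit_code": None, "stdout": "", "stderr": f"timed out after {timeout_s}s"}
    return {"exit_code": proc.returncode, "stdout": proc.stdout, "stderr": proc.stderr}


if __name__ == "__main__":
    print(run_bash("echo hello && ls | head -n 3"))
```

Returning the exit code and stderr alongside stdout matters: they are the feedback the model uses to notice and correct its own mistakes.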

Sandboxes: Safe Execution Environments

Running agent-generated code locally is risky. Sandboxes provide:

- Isolated execution - Safe to run untrusted code

- Scalability - Spin up environments on demand

- Security controls - Allowlist commands, enforce network isolation

Good sandboxes come pre-configured with the right defaults - language runtimes, package managers, browsers for verification, CLIs for common tasks.
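Command allowlisting is the easiest of these controls to sketch. The example below is a simplified illustration with a made-up allowlist. It is only one layer: actual isolation comes from the container or VM the sandbox runs in, and an allowlisted program can still do harm if it can execute arbitrary code.

```python
import shlex

# Hypothetical allowlist; a real deployment would tune this per task.
ALLOWED_COMMANDS = frozenset({"ls", "cat", "grep", "pytest"})


def check_command(command: str, allowed: frozenset = ALLOWED_COMMANDS) -> list[str]:
    """Parse a command into argv and reject it unless the program is allowlisted.

    The returned argv is meant to be executed WITHOUT a shell, so operators
    such as && or | are passed as literal arguments rather than interpreted.
    """
    try:
        argv = shlex.split(command)
    except ValueError as exc:  # e.g. unbalanced quotes
        raise PermissionError(f"unparseable command: {exc}") from exc
    if not argv:
        raise PermissionError("empty command")
    if argv[0] not in allowed:
        raise PermissionError(f"command not on allowlist: {argv[0]!r}")
    return argv


def is_allowed(command: str) -> bool:
    """Boolean convenience wrapper around check_command."""
    try:
        check_command(command)
    except PermissionError:
        return False
    return True


if __name__ == "__main__":
    for cmd in ["ls -la", "curl http://example.com", "ls; rm -rf /"]:
        print(f"{cmd!r}: {'allowed' if is_allowed(cmd) else 'blocked'}")
```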

Memory & Search: Knowledge Extension

Models only know what’s in their weights and current context. Harnesses bridge this gap:

- Memory files like AGENTS.md get injected on startup for continual learning

- Web search provides access beyond the knowledge cutoff

- MCP tools like Context7 fetch up-to-date documentation

This transforms a static model into a system that can learn and access current information.
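Memory injection can be sketched in a few lines. The functions below are hypothetical helpers, and the `<memory>` wrapper and character cap are assumptions made for illustration; real harnesses differ in how they format and bound injected memory.

```python
from pathlib import Path


def build_system_prompt(base_prompt: str, memory_path: Path, max_chars: int = 8000) -> str:
    """Append the memory file to the system prompt if it exists and is non-empty."""
    if not memory_path.is_file():
        return base_prompt
    memory = memory_path.read_text(encoding="utf-8").strip()
    if not memory:
        return base_prompt
    if len(memory) > max_chars:  # keep memory from crowding out the task
        memory = memory[:max_chars] + "\n[memory truncated]"
    return f'{base_prompt}\n\n<memory source="{memory_path.name}">\n{memory}\n</memory>'


def remember(memory_path: Path, lesson: str) -> None:
    """Append one lesson so that future sessions start with it."""
    with memory_path.open("a", encoding="utf-8") as f:
        f.write(f"- {lesson}\n")


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        memory_file = Path(workdir) / "AGENTS.md"
        remember(memory_file, "Run tests with pytest -q before committing")
        print(build_system_prompt("You are a coding agent.", memory_file))
```

The learning here happens in the file, not in the weights: what one session writes with `remember`, the next session reads on startup.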

Battling Context Rot

As context windows fill up, model performance degrades. Harnesses combat this through:

- Compaction - Summarize and offload when approaching limits

- Tool output management - Keep only head/tail of large outputs, offload full data to filesystem

- Progressive disclosure - Skills and tools loaded just-in-time instead of all upfront

Without these strategies, agents would hit hard limits or degrade before completing complex tasks.
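Tool output management is the most mechanical of the three strategies. A minimal sketch, assuming line-based truncation and a caller-supplied offload path (both illustrative choices):

```python
from pathlib import Path


def manage_tool_output(output: str, offload_path: Path, head: int = 20, tail: int = 20) -> str:
    """Return short output unchanged; otherwise keep head and tail and save the rest to disk."""
    lines = output.splitlines()
    if len(lines) <= head + tail:
        return output
    offload_path.write_text(output, encoding="utf-8")  # full data stays retrievable
    omitted = len(lines) - head - tail
    marker = f"[... {omitted} lines omitted; full output saved to {offload_path} ...]"
    # Slice from an explicit index so that tail=0 yields an empty tail.
    return "\n".join(lines[:head] + [marker] + lines[len(lines) - tail:])


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        big_output = "\n".join(f"line {i}" for i in range(100))
        print(manage_tool_output(big_output, Path(workdir) / "tool_output.txt", head=2, tail=2))
```

The marker line tells the model where the full output lives, so it can read or search the file if the omitted middle turns out to matter.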

Long-Horizon Autonomous Execution

The holy grail: agents that complete complex software projects autonomously. This requires combining all the primitives:

The Ralph Loop Pattern: When a model tries to exit, the harness intercepts and reinjects the original prompt in a fresh context window, forcing continued work toward the goal. The filesystem makes this work - each iteration starts clean but reads state from the previous round.
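A simplified sketch of the loop follows. It assumes a session is a function that receives the original prompt plus the notes left on disk and returns updated notes; the `ALL DONE` marker, the progress file, and the stub session are hypothetical stand-ins for a real model run and its exit interception.

```python
from pathlib import Path
from typing import Callable


def ralph_loop(
    run_session: Callable[[str, str], str],
    prompt: str,
    progress_path: Path,
    done_marker: str = "ALL DONE",
    max_iterations: int = 10,
) -> int:
    """Restart fresh sessions on the same prompt until the notes say the goal is met.

    Returns the number of sessions used.
    """
    for iteration in range(1, max_iterations + 1):
        notes = progress_path.read_text(encoding="utf-8") if progress_path.exists() else ""
        # Fresh context: the session sees only the original prompt and the notes on disk.
        updated_notes = run_session(prompt, notes)
        progress_path.write_text(updated_notes, encoding="utf-8")
        if done_marker in updated_notes:
            return iteration
    raise RuntimeError("goal not reached within the iteration budget")


TASKS = ["write parser", "add tests", "update docs"]  # illustrative work items


def stub_session(prompt: str, notes: str) -> str:
    """Stand-in for a model run that finishes one task and then tries to exit."""
    done = [line for line in notes.splitlines() if line.startswith("done: ")]
    if len(done) < len(TASKS):
        done.append(f"done: {TASKS[len(done)]}")
    if len(done) == len(TASKS):
        done.append("ALL DONE")
    return "\n".join(done)


if __name__ == "__main__":
    import tempfile

    with tempfile.TemporaryDirectory() as workdir:
        used = ralph_loop(stub_session, "Finish the project.", Path(workdir) / "PROGRESS.md")
        print(f"finished after {used} sessions")
```

The iteration cap is a deliberate design choice: a loop that forces continued work needs its own stopping condition for goals the model cannot reach.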

Planning & Verification: Models decompose goals into steps, complete each one, then verify correctness through tests or self-evaluation. This grounds solutions in verifiable output rather than just “looks good to me.”
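One way to picture plan-then-verify is as a list of steps, each paired with a check that must pass before the next step starts. The `Step` structure and retry policy below are assumptions made for illustration; in practice `verify` would typically run a test suite or a linter.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[], None]     # performs the work
    verify: Callable[[], bool]  # checks the result, e.g. by running tests


def execute_plan(steps: list[Step], max_attempts: int = 3) -> list[str]:
    """Run steps in order, retrying each until its check passes or attempts run out."""
    completed: list[str] = []
    for step in steps:
        for _ in range(max_attempts):
            step.run()
            if step.verify():
                completed.append(step.name)
                break
        else:  # no attempt passed verification
            raise RuntimeError(
                f"step {step.name!r} failed verification after {max_attempts} attempts"
            )
    return completed


if __name__ == "__main__":
    attempts = {"count": 0}

    def flaky_build() -> None:
        attempts["count"] += 1

    plan = [Step("build", flaky_build, lambda: attempts["count"] >= 2)]
    print(execute_plan(plan), "after", attempts["count"], "attempts")
```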

Shared Ledger: For multi-agent systems, the filesystem becomes a coordination mechanism where agents can see each other’s work and build collaboratively.

The Model-Harness Feedback Loop

Here’s where it gets interesting: the models behind modern agents like Claude Code and Codex are post-trained with the harness in the loop. This creates co-evolution between model and harness.

Useful harness primitives get discovered, then models are trained to use them natively. Over iterations, models become deeply coupled to specific harness patterns - sometimes to the point of overfitting.

Example: OpenAI’s Codex documentation notes that changing the apply_patch tool logic degrades performance, even though a truly general model shouldn’t be that sensitive to patch format.

But this doesn’t mean you should always use the “official” harness for a model. The Terminal Bench 2.0 leaderboard shows Claude Opus 4.6 scoring far better in custom harnesses than in Claude Code’s native harness for certain tasks. There’s significant juice to squeeze through harness optimization.

The Future of Harness Engineering

Some predict harnesses will matter less as models improve. That’s partially true - models will get better at planning, verification, and long-horizon coherence natively.

But just as prompt engineering remains valuable despite better models, harness engineering will continue to matter because it’s not just about patching deficiencies. It’s about engineering systems around intelligence to make it more effective.

Even a perfect model benefits from well-configured environments, the right tools, durable state, and verification loops.

Open Problems in Harness Research

LangChain’s team is exploring:

- Parallel agent orchestration - Hundreds of agents working on a shared codebase simultaneously

- Self-improving harnesses - Agents analyze their own traces to identify and fix harness-level failures

- Just-in-time assembly - Harnesses that dynamically load the right tools and context for each task instead of being pre-configured

These represent the next frontier: moving from static harness designs to adaptive systems that optimize themselves.

The Takeaway

The model contains the intelligence. The harness is the system that makes that intelligence useful.

Most agent discussions focus on model capabilities - which are important. But harness design determines whether that capability translates to completed work or just impressive demos.

As we push toward autonomous software creation and complex multi-step tasks, harness engineering becomes the practical bottleneck. The models are often ready; we just need better scaffolding to let them work effectively.

For anyone building agents: spend time on your harness. The defaults matter. The environment configuration matters. The verification loops matter. These aren’t auxiliary concerns - they’re what determine whether your agent actually ships.
